There’s been a lot of armchair analysis about various models being used to predict outcomes of COVID-19. For those of us who have built spatial and statistical models, all of this discussion brings to mind George Box’s dictum, “All models are wrong, but some are useful”—or useless, as the case may be.

The problem with data-driven models, especially when data is lacking, can be easily explained. First of all, when it comes to helping decision makers make quality decisions, statistical hypothesis testing and data analysis are just one tool in a large toolbox.

It’s based on what we generally call reductionist theory. In short, the tool examines parts of a system (usually by estimating an average or mean) and then makes inferences to the whole system. The tool is usually quite good at testing hypotheses under carefully controlled experimental conditions.

For example, the success of the pharmaceutical industry is due, in part, to the fact that it can design and implement controlled experiments in a laboratory. However, even under controlled experimental procedures, the tool has limitations and is subject to sampling error. In reality, the true mean (the true number or answer we are seeking) is unknowable because we cannot possibly measure everything or everybody, and model estimates always have a certain amount of error.
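For readers who want to see sampling error rather than take it on faith, here is a minimal sketch in Python. The "population" is synthetic and every number in it is an assumption chosen for illustration; in a real study the true mean would not be available to check against.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is reproducible

# Hypothetical "population" of 100,000 measurements; the values are synthetic.
population = [random.gauss(50.0, 10.0) for _ in range(100_000)]
true_mean = statistics.fmean(population)


def sample_means(sample_size, repeats=200):
    """Draw `repeats` random samples and return the mean of each one."""
    return [
        statistics.fmean(random.sample(population, sample_size))
        for _ in range(repeats)
    ]


small = sample_means(10)
large = sample_means(1000)

# The spread of the repeated estimates is the sampling error of the mean.
spread_small = statistics.stdev(small)
spread_large = statistics.stdev(large)

print(f"true mean (normally unknowable): {true_mean:.2f}")
print(f"spread of estimates, n=10:   {spread_small:.2f}")
print(f"spread of estimates, n=1000: {spread_large:.2f}")
```

Every estimate misses the true mean by some amount. Larger samples shrink that error, but they never remove it.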

These Models Are Unreliable

Simple confidence intervals can provide good insight into the precision and reliability, or usefulness, of the part estimated by reductionist models. With the COVID-19 models, the so-called “news” appears to be using either the confidence interval from one model or actual estimated values (i.e., means) from different models as a way of reporting a range for the “predicted” number of people who may contract the disease or die from it (e.g., 60,000 to 2 million).

Either way, the range in estimates is quite large and therefore useless, at least for helping decision makers make key decisions about our health, economy, and civil liberties. The armchair analysts’ descriptions of these estimates show how clueless they are about even the simplest statistical interpretation.

The fact is, when a model has a confidence interval as wide as those reported, the primary conclusion is that the model is imprecise and unreliable. Likewise, if these wide ranges are coming from estimated means of several different models, it clearly indicates a lack of repeatability (i.e., again, a lack of precision and reliability).

Either way, these types of results are an indication of bias in the data, which can come from many sources (such as not enough data, measurement error, reporting error, using too many variables, etc.). For the COVID-19 models, most of the data appears to come from large population centers like New York. This means the data sample is biased, which makes the entire analysis invalid for making inferences anywhere outside of New York or, at best, anywhere without a similar population density.

It would be antithetical to the scientific method if such data were used to make decisions in, for example, Wyoming or rural Virginia. While these models can sometimes provide decision makers with useful information, the decisions that are being made during this crisis are far too important and complex to be based on such imprecise data. There are volumes of scientific literature that explain the limitations of reductionist methods, if the reader wishes to investigate this further.
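The point about interval width can be made concrete with a short Python sketch. The two data sets below are hypothetical and are not COVID-19 data. The helper names and the "relative width" score are my own illustrative choices, and the interval uses a simple normal approximation, which is crude for samples this small.

```python
import math
import statistics


def mean_confidence_interval(values, z=1.96):
    """Approximate 95% confidence interval for the mean (normal approximation)."""
    n = len(values)
    mean = statistics.fmean(values)
    std_err = statistics.stdev(values) / math.sqrt(n)
    return mean - z * std_err, mean + z * std_err


def relative_width(interval):
    """Interval width divided by its midpoint: a rough imprecision score."""
    low, high = interval
    return (high - low) / ((high + low) / 2)


# Hypothetical estimates with the same mean but very different scatter.
tight = [98, 101, 100, 99, 102, 100, 101, 99]
scattered = [20, 310, 75, 5, 190, 40, 150, 10]

for label, data in (("tight", tight), ("scattered", scattered)):
    low, high = mean_confidence_interval(data)
    print(f"{label}: CI = ({low:.1f}, {high:.1f}), "
          f"relative width = {relative_width((low, high)):.2f}")

# The range quoted in the text, treated as if it were a single interval.
reported = (60_000, 2_000_000)
print(f"reported range: relative width = {relative_width(reported):.2f}")
```

Both samples have the same mean, yet only the first pins it down. By this rough measure, the reported range of 60,000 to 2 million is nearly twice as wide as its own midpoint.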

Despite Unreliability, Models Influence Huge Decisions

Considering the limitations of this tool under controlled laboratory conditions, imagine what happens within more complex systems that encompass large areas, contain millions of people, and vary with time (such as seasonal or annual changes). In fact, for predicting outcomes within complex, adaptive, and dynamic systems, where controlled experiments are not possible, data is lacking, and large amounts of uncertainty exist, the reductionists’ tool is not useful.

Researchers who speak as if their answers to such complex and uncertain problems are unquestionable and who politicize issues like COVID-19 are by definition pseudo-scientists. In fact, the scientific literature (including research from a Nobel Prize winner) shows that individual “experts” are no better than laymen at making quality decisions within systems characterized by complexity and uncertainty.

The pseudo-scientists want to hide this fact. They like to simplify reality by ignoring or hiding the tremendous amount of uncertainty inherent in these models. They do this for many reasons: it’s easier to explain cause/effect relationships, it’s easier to “predict” consequences (that’s why most of their predictions are wrong or always changing), and it’s easier to identify “victims” and “villains.”

They accomplish this by first asking the wrong questions. For COVID-19, the relevant question is not “How many people will die?”, which is divisive and impossible to answer, but “What can we do to avoid, reduce, and mitigate this disease without destroying our economy and civil rights?”

If the Assumptions Are Wrong, Everything Else Is

Secondly, pseudo-scientists hide and ignore the assumptions inherent in these models. The assumptions are the premise of any model; if the assumptions are violated or invalid, the entire model is invalid. Transparency is crucial both to a useful model and to building trust among the public. In short, whether a model is useful or useless has more to do with a person’s values than with science.

The empirical evidence is clear: what’s really needed is good thinking by actual people, not technology, to identify and choose quality alternatives. Technology will not solve these issues and should only be used as an aid or tool (and only if it is as transparent and reliable as possible).

What is needed, and what the scientific method has always required even though it is often ignored nowadays, is what is called multiple working hypotheses. In layman’s terms, this simply means that we include experts and stakeholders with different perspectives, ideas, and experiences.

Models Cannot Give Answers Alone

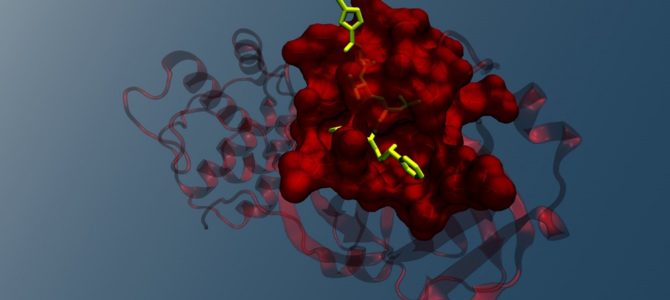

The type of modeling that is needed to make quality decisions for the COVID-19 crisis is what we modelers call participatory scenario modeling. This method uses Decision Science tools like Bayesian networks and Multiple Objective Decision Analysis that explicitly link data with the knowledge and opinions of a diverse mix of subject matter experts. The method uses a systems, not a reductionist, approach and seeks to help the decision maker weigh the available options and alternatives.
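To give a feel for what a Bayesian network does, here is a deliberately tiny, hand-rolled sketch in Python: a three-node chain (policy option, transmission level, hospital overload). The node names, option labels, and every probability are hypothetical placeholders for values that data and subject matter experts would supply; a real participatory model would be far larger and would normally be built with dedicated software.

```python
# Conditional probability tables (CPTs). Every number is a made-up placeholder
# standing in for values that experts and data would jointly supply.
P_TRANSMISSION = {  # P(transmission level | policy option)
    "option_a": {"high": 0.7, "low": 0.3},
    "option_b": {"high": 0.3, "low": 0.7},
}
P_OVERLOAD = {  # P(hospitals overloaded | transmission level)
    "high": 0.6,
    "low": 0.1,
}


def prob_overload(option):
    """Marginalize over transmission: sum of P(t | option) * P(overload | t)."""
    return sum(
        p_level * P_OVERLOAD[level]
        for level, p_level in P_TRANSMISSION[option].items()
    )


for option in P_TRANSMISSION:
    print(f"{option}: P(overload) = {prob_overload(option):.2f}")
```

Because every table is written out, anyone at the table can see which assumption drives the answer and can argue for changing it.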

The steps are: frame the question appropriately, develop quality alternatives, evaluate the alternatives, and plan accordingly (i.e., make the decision). The key is participation from a diverse set of subject-matter experts from interdisciplinary backgrounds working together to build scenario models that help decision makers assess the decision options in terms of probability of the possible outcomes.
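The "evaluate the alternatives" step can be sketched as a simple weighted scoring exercise, one common form of multiple objective decision analysis. In the Python sketch below, the three objectives echo the concerns named earlier (health, economy, civil liberties); the alternatives, scores, and weights are all hypothetical, and the additive scoring rule is an assumption made for simplicity.

```python
# Hypothetical weights a decision maker might assign to each objective (sum to 1).
WEIGHTS = {"health": 0.5, "economy": 0.3, "civil_liberties": 0.2}

# Hypothetical scores (0 = worst, 1 = best) that a diverse panel might assign.
ALTERNATIVES = {
    "alternative_a": {"health": 0.9, "economy": 0.3, "civil_liberties": 0.2},
    "alternative_b": {"health": 0.6, "economy": 0.6, "civil_liberties": 0.6},
    "alternative_c": {"health": 0.2, "economy": 0.9, "civil_liberties": 0.9},
}


def weighted_value(scores, weights):
    """Additive value: the weighted sum of an alternative's objective scores."""
    return sum(weights[objective] * scores[objective] for objective in weights)


def rank_alternatives(alternatives, weights):
    """Return alternative names ordered from highest to lowest weighted value."""
    return sorted(
        alternatives,
        key=lambda name: weighted_value(alternatives[name], weights),
        reverse=True,
    )


print(rank_alternatives(ALTERNATIVES, WEIGHTS))

# A different (also hypothetical) set of values changes the preferred option.
health_first = {"health": 0.8, "economy": 0.1, "civil_liberties": 0.1}
print(rank_alternatives(ALTERNATIVES, health_first))
```

The scores stay the same in both runs; only the weights change, and so does the top-ranked alternative. That is why the weights, like the assumptions, need to be out in the open.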

Certain models, such as those for COVID-19, require a diverse set of experts, whereas climate change models require participation from both stakeholders and experts. The participatory nature of the process makes assumptions more transparent, helps people better understand the issues, and builds trust among competing interests.

For COVID-19, we likely need a set of models for medical and economic decisions that augment final decision-support models that help the decision makers weigh their options. No experienced decision maker would (or should) rely on any one model or any one subject-matter expert when making complex decisions with so much uncertainty and so much at stake.

Pseudo-scientists only allow participation from subject-matter experts who agree with their agenda. In other words, they often rig the participatory models. I’m not saying this is occurring with COVID-19, but it has happened before and could happen again.