A study claiming abortion restrictions probably cause suicide is extremely flawed.

At the end of 2022, JAMA Psychiatry released a study claiming abortion restrictions probably cause suicide. While many academic papers go unread or uncited behind paywalls, this paper was immediately promoted by various media outlets including The Guardian, NBC News, and The Hill.

The study’s results were politically relevant — the authors claimed abortion restrictions raised suicide rates of young women by more than 5 percent. The authors were affiliated with the University of Pennsylvania and Penn Medicine, and JAMA Psychiatry is one of the world’s most influential psychiatry journals. If relatively weak restrictions from the Roe v. Wade era could cause suicide among young women to rise, how much more would suicide spike as some states imposed actual abortion bans following Dobbs v. Jackson Women’s Health Organization?

But the paper is extremely flawed. The authors lack the majority of the data they should have. Data the authors claim is unavailable from the Centers for Disease Control and Prevention (CDC) is in fact available. The pattern of missing data biases their measured suicide rates upward, and their use of statistical controls was poor.

Drawing Conclusions After Throwing Out Half the Data

The authors’ basic strategy was to look at the suicide rates of young women by state and year and compare them to abortion restrictions across states and years. They find that the presence of laws that impose regulatory burdens on abortion providers is associated with a 5 percent higher suicide rate for young women for the period 1976-2016. They show a similar increase in suicide rates using a weighted index of abortion policy for the period 2006-2017. Because the authors find no relationship between abortion restrictions and either suicide among older women or motor vehicle deaths, they reason it’s likely that restricting abortion increases suicide among young women who might seek an abortion.

The authors report that a majority of the data they wanted to use in their analysis was missing. The results the authors display in Table 1 in their paper are based upon data from 50 states for 1976-2016. If all the data was available, there would be 2,050 state-year observations of suicide rates for women ages 20-34 (50 states times 41 years). The authors have 1,022 observations. That is about half the relevant observations.
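The arithmetic behind that figure is easy to check. The short sketch below counts the state-year cells a complete panel would contain and compares the total with the 1,022 observations the authors report. The function name is mine, used only for illustration.

```python
# Size of a complete state-year panel for the study period described above.
N_STATES = 50
FIRST_YEAR, LAST_YEAR = 1976, 2016


def expected_observations(n_states: int, first_year: int, last_year: int) -> int:
    """Number of state-year cells in a complete panel (both end years included)."""
    return n_states * (last_year - first_year + 1)


expected = expected_observations(N_STATES, FIRST_YEAR, LAST_YEAR)
reported = 1022  # observations the authors report having
share = reported / expected

print(expected)         # 2050
print(round(share, 3))  # 0.499
```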

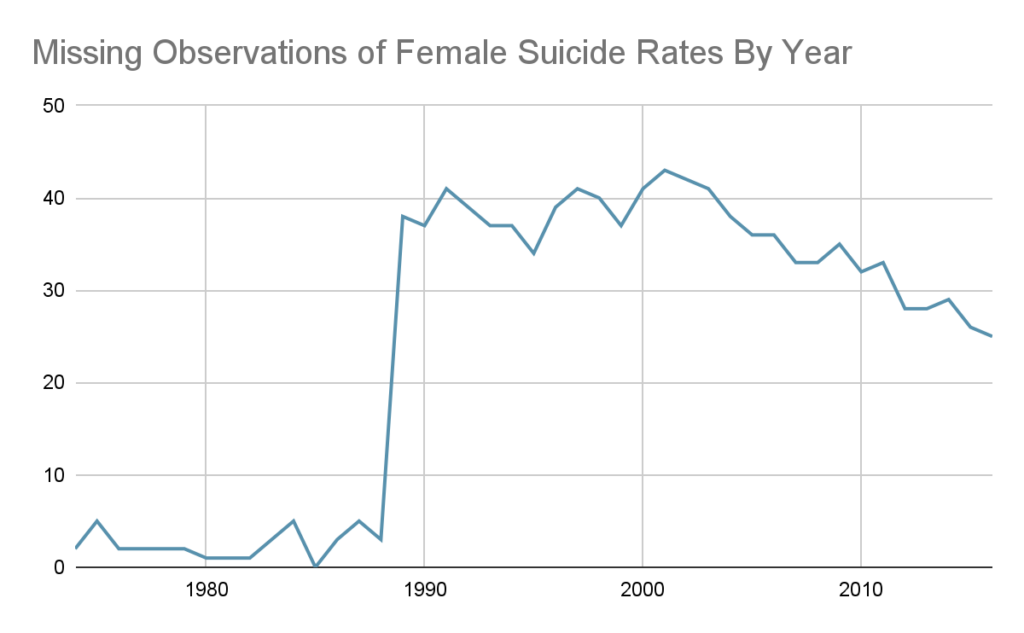

Observations are not randomly missing from collected data. In the online supplemental materials, you can see the suicide rate data these authors have by state and year. Here are the missing observations by year.

Why does data availability plummet? Because, for years after 1989, the CDC suppresses “[s]ub-national statistics representing fewer than ten persons” for privacy reasons.

When the number of suicides among young women in a given state and year is low, the data is suppressed. So the lower a state’s suicide count and rate, the more likely that observation is to be missing from the data. Because these low values are dropped, averages computed from what remains will overstate the real suicide rate.
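To see why dropping low counts pushes averages up, consider a minimal sketch with made-up numbers. The five states, their populations, and their suicide counts below are hypothetical, and the threshold of ten simply mirrors the CDC rule quoted above. Nothing here is taken from the paper's data.

```python
from statistics import mean

SUPPRESSION_THRESHOLD = 10  # counts below this are withheld, mirroring the CDC rule

# Hypothetical states in a single year: (population of young women, suicides)
states = {
    "A": (150_000, 6),
    "B": (400_000, 18),
    "C": (200_000, 8),
    "D": (900_000, 45),
    "E": (120_000, 4),
}


def rate_per_100k(population: int, deaths: int) -> float:
    """Deaths per 100,000 population."""
    return deaths * 100_000 / population


# Rates for every state, including those that would be suppressed.
all_rates = [rate_per_100k(pop, deaths) for pop, deaths in states.values()]

# Rates only for states whose count is high enough to be published.
published_rates = [
    rate_per_100k(pop, deaths)
    for pop, deaths in states.values()
    if deaths >= SUPPRESSION_THRESHOLD
]

true_mean = mean(all_rates)
published_mean = mean(published_rates)

print(round(true_mean, 2))       # 4.17
print(round(published_mean, 2))  # 4.75
```

In this toy example the average over published cells is about 14 percent higher than the true average, even though no individual rate was misreported.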

Anti-abortion laws become more common over time as available data declines, so the authors are disproportionately overstating suicide rates for years with restrictive abortion laws.

Consider Missouri. The authors record Missouri as first enforcing a restrictive abortion law in 1988. Before 1988, the authors have data on Missouri for every year. After 1988, they are missing data for more than half the years their study covers. This overstates suicide rates in Missouri for years in which Missouri had restrictive abortion laws.
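A pattern like Missouri's can produce an apparent policy effect where none exists. As a rough sketch with invented counts (not Missouri's actual figures), suppose a state has exactly the same annual suicide counts before and after a law takes effect, but low-count years are suppressed only in the later period. For simplicity I assume the law and the suppression rule begin at the same time.

```python
from statistics import mean

THRESHOLD = 10  # assumption: counts below this are suppressed in the later period

# Hypothetical annual suicide counts. They are identical in both periods
# by construction, so the law has no true effect in this example.
counts_before_law = [7, 12, 9, 14, 8, 11]  # every year is published
counts_after_law = [7, 12, 9, 14, 8, 11]   # low-count years are suppressed


def observed(counts, suppression_active):
    """Return the counts a researcher would actually see."""
    if not suppression_active:
        return list(counts)
    return [c for c in counts if c >= THRESHOLD]


before = mean(observed(counts_before_law, suppression_active=False))
after = mean(observed(counts_after_law, suppression_active=True))
apparent_change = after / before - 1

print(round(apparent_change, 3))  # 0.213
```

Here the measured average rises by about 21 percent although the underlying counts are identical in both periods.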

Missing Data Can Be Found

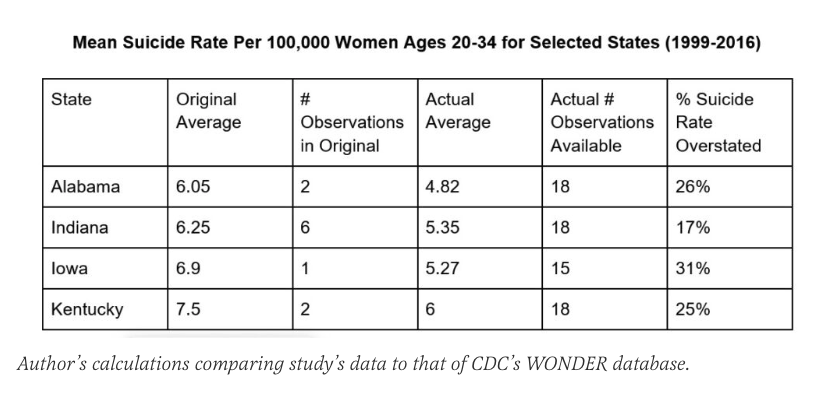

But the data for many of these years is not actually missing. I easily obtained it from CDC WONDER, the government database the authors said they used. They claim to have data for Alabama for only two years between 1999 and 2016, yet the CDC provides data on suicide rates for Alabama for all years 1999-2016.

When contacted about the discrepancy, lead author Jonathan Zandberg replied that the researchers first obtained suicide rates for women ages 20-24 and then for women ages 25-34. If data was missing for either group because the number of suicides was too low, they threw the whole observation out. This reduces the amount of data available and biases suicide rate measures upward. The table below shows average suicide rates between 1999 and 2016 using the authors’ data versus all data actually available from the CDC, and it demonstrates that their estimates are too high. The more missing observations, the worse the bias. Take the following states, where the authors wildly understate the available data.

The authors are claiming that there is an expected 5 percent increase in suicide rates in states that have restrictive abortion laws. But the upward bias in their recorded suicide rates for some states is multiple times the estimated effect of abortion restrictions on suicide rates. This data cannot demonstrate that abortion restrictions are associated with suicide increases because the bias in their data is so extreme.
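The rule Zandberg described, and the alternative of requesting the combined 20-34 age group, can be compared in a small sketch. The yearly counts are hypothetical, and I am assuming the combined count is suppressed only when it falls below ten on its own.

```python
from statistics import mean
from typing import List, Optional, Tuple

SUPPRESSION_THRESHOLD = 10  # counts below this are withheld


def suppress(count: int) -> Optional[int]:
    """Return the count if it would be published, otherwise None."""
    return count if count >= SUPPRESSION_THRESHOLD else None


# Hypothetical (suicides ages 20-24, suicides ages 25-34) per year for one state
true_counts: List[Tuple[int, int]] = [
    (4, 14), (9, 12), (11, 16), (6, 9), (3, 5), (10, 10),
]


def split_rule(pairs: List[Tuple[int, int]]) -> List[int]:
    """Keep a year only if both age-group counts survive suppression."""
    kept = []
    for younger, older in pairs:
        a, b = suppress(younger), suppress(older)
        if a is not None and b is not None:
            kept.append(a + b)
    return kept


def combined_rule(pairs: List[Tuple[int, int]]) -> List[int]:
    """Keep a year if the combined 20-34 count survives suppression."""
    kept = []
    for younger, older in pairs:
        total = suppress(younger + older)
        if total is not None:
            kept.append(total)
    return kept


split_kept = split_rule(true_counts)
combined_kept = combined_rule(true_counts)
true_mean = mean(a + b for a, b in true_counts)

print(split_kept, mean(split_kept))        # [27, 20] 23.5
print(combined_kept, mean(combined_kept))  # [18, 21, 27, 15, 20] 20.2
```

With these invented counts, the split rule keeps two of six years and yields an average of 23.5, the combined query keeps five years and yields 20.2, and the true average is about 18.2. Splitting by age group before applying the threshold discards more years and inflates the average further.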

Because the authors throw out so much data, there are many states for which the authors literally have no data after 1989, even though such data is available! The study’s authors did not respond to questions about the biased data leading to overstated suicide rates, although they did respond to questions about why so much data was missing. The co-author, Ran Barzilay, noted that all studies have limitations. The lead author, Zandberg, quoted the original article’s admission that “we relied on data on annual suicide rates released by the CDC. Accordingly, some data were not available, which could have led to an ascertainment bias.”

Acknowledging the risk of bias does not address the obvious and substantial bias. The data availability was also much less limited than the authors claim.

The missing data and biased data are not the only problems.

Bad Controls for Political Environment

Beyond examining whether there is a link between specific anti-abortion laws and suicide rates, the authors also examine whether there is a relationship between an index of abortion restrictions from 2006-2017 and suicide rates. This approach also suffers from similar data issues — most of the data is missing in a way that exaggerates suicide rates.

Another issue is their approach to controlling for political differences among states. Since conservative states are more likely to restrict abortion, other factors associated with conservative politics could be responsible for the apparent relationship between suicide and abortion restrictions. White Americans are more Republican than average and more suicidal than average. The authors do not control for the percent of the state population that is white, although they do control for the share of the population that is black. (For statistical reasons, it is a bad idea to try to control for every racial/ethnic category simultaneously.)

They try to control for the political environment of different states, but they do so incorrectly. They controlled “for the annual fraction of Republican senators representing the state at the US Senate.” U.S. senators don’t write or enforce state laws. Even as a crude proxy for overall state politics, this approach is bad and would amount to claiming California and Georgia have the same politics because they both lack Republican senators. Likewise, a southern state having two Democratic senators in 1976 implies different politics from a Midwestern state with Democratic senators today.

To summarize: the authors ignore a huge share of the data, and the data they keep is biased. The overestimation of suicide rates occurs in years after 1989, when abortion laws were generally stricter than in the period immediately following Roe v. Wade. As a result, suicide rates are most exaggerated in the years when abortion restrictions are more common. The authors also fail to control for obvious state-level confounders.

Trustworthy Medical Journals Would Be Valuable

Abortion restrictions may affect suicide rates, but the JAMA Psychiatry paper should convince no one. In fact, the paper should be retracted.

If we have learned anything from the Covid-19 pandemic, it is that it’s valuable when scientific institutions provide balanced and accurate information on issues of public health — even when issues are politically charged. I hope JAMA Psychiatry does better.