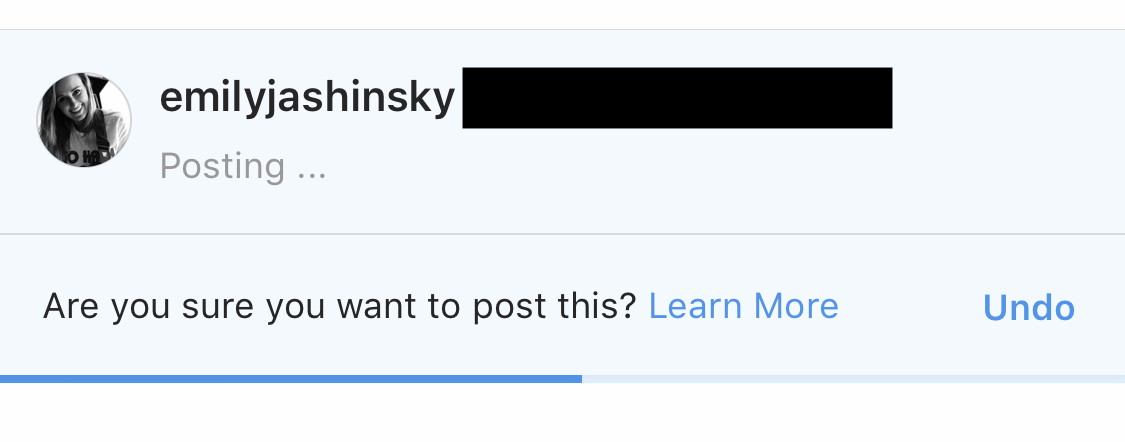

Instagram thinks it has an algorithm capable of identifying “offensive” content and wants artificial intelligence to “intervene” before users post anything objectionable. The platform’s Monday announcement indicates a notification will privately flag comments to their author before they’re actually published.

“In the last few days, we started rolling out a new feature powered by AI that notifies people when their comment may be considered offensive before it’s posted,” said Instagram Head Adam Mosseri, revealing the process is already in motion. “This intervention gives people a chance to reflect and undo their comment and prevents the recipient from receiving the harmful comment notification. From early tests of this feature, we have found that it encourages some people to undo their comment and share something less hurtful once they have had a chance to reflect.”

The AI intervention was announced along with a forthcoming setting that will allow users to “restrict” others without notifying them, meaning comments left by restricted individuals will be visible only to the restricted individuals themselves. Instagram is framing the features as anti-bullying measures as the company grapples with how to protect teenagers from hurtful interactions on the platform.

Since Mosseri said Instagram had already begun “rolling out” the comment feature, I tested it by attempting to leave a mean-spirited comment on a not entirely undeserving friend’s recent picture, and received the following message from Instagram after clicking “post.” The blue bar beneath the question functioned as a countdown clock, giving me several seconds to decide whether to proceed. (I spared my friend the constructive criticism, at least for now.)

It’s creepy, to be sure. AI will effectively be monitoring every word we type in a comment box for “offensive” content, just waiting to chime in with an admonishment. Big Tech wants to do our parenting for us (maybe we should try that too).

In practice, of course, these interventions will almost certainly not be limited to salty teenagers, some of whom will relish the opportunity to trigger the feature. Obviously what Silicon Valley deems “offensive” is overly expansive, lumping civil opposition to leftist dogma in with genuine hate speech and bullying.

That said, this may not be the worst manifestation of Big Tech’s language policing. While it will likely further normalize the narrowed boundaries of acceptable expression enforced by our progressive overlords in Silicon Valley, it’s a step above banning the content outright.

Instagram already does this in other ways, such as demoting “inappropriate” content on Explore and hashtag pages. There’s some troubling anecdotal evidence of the platform targeting conservative content as well. It has also banned several radical conspiracy theorists. (For their part, leftists continue protesting Instagram’s nudity policy.)

But if Instagram has research suggesting AI interventions can reduce bullying without censoring expression, there are worse ways for social media giants to police us, even if that’s a statement on how low the bar has fallen.