The National Science Foundation’s Division of Research on Learning in Formal and Informal Settings awarded $2,249,999 to Oakland, California-based nonprofit YR Media — a “media, technology and music training center and platform for emerging BIPOC content creators who are using their voices to change the world” — to teach “underrepresented” and “underserved” youth how to integrate artificial intelligence technologies with critical theory.

Starting in 2019, the grant, titled “Innovative approaches to Informal Education in Artificial Intelligence,” allocated more than $2.2 million to YR Media to “research, design, and develop innovative approaches focusing on Artificial Intelligence (AI) for under-represented youth ages 14-24.”

The program, consisting of participants who “are 90% youth of color and 80% low income,” involved partnerships with the MIT Media Lab and Google staff and was “grounded in sociocultural learning theory” predicated on a “theoretical framework” of “Computational Thinking plus Critical Pedagogy.”

Critical pedagogy is an educational framework and social cause that derives from, and applies the methods of, critical theory, the broader social philosophy stemming from the cultural Marxists of the Frankfurt School. Other derivatives of critical theory include the well-known concept of critical race theory and the myriad critical approaches to human sexuality that stress identitarian power struggles.

Emphasizing the sociocultural component of the program, the grant poses a series of questions like: “What do underrepresented youth understand about AI and its role in society?” and “What are the features of an engaging ethics-centered pedagogy with AI?”

It further affirms the program’s divisive attempt at stirring racial consciousness by indicating that “[t]he research design will use ethnographic techniques and design research to study and analyze youth learning.” Emphasizing the different experiences of various racial demographics, “ethnographic techniques” like those employed here are often just veiled attempts to make students think they’re oppressed via the manipulation of statistics.

Young participants were encouraged to view “AI through an ethics-and-equity lens,” which the program suggests is “desperately needed by the digital media and tech sectors as well as the general public.”

With the assistance of “open-source tutorials” from the MIT App Inventor, participants created “their own AI tools” to “gain[] perspectives on the social impact of AI.” This program fostered the release of “products” focusing on “facial recognition, deepfakes, and virtual proctoring software.”

The program further explored “the educational conditions that promote STEM engagement among BIPOC youth and others who have the most to offer and the most at stake in building ethical, equitable, and expressive AI.”

The grant indicates that “[t]he model that has emerged from Understanding AI frames computer science as an expressive medium for storytelling, meaning-making, and social justice.” By fostering “Critical Computational Expression,” the program has its young participants “combine the investigation of key social issues” and “creative expression to produce dynamic digital products … that inform and shift national conversations about STEM and society.”

Artificial intelligence is a burgeoning field of key importance to the global economy and human civilization. All technology reflects the preferences of its creators, and AI is no exception, despite its alleged synthetic sentience.

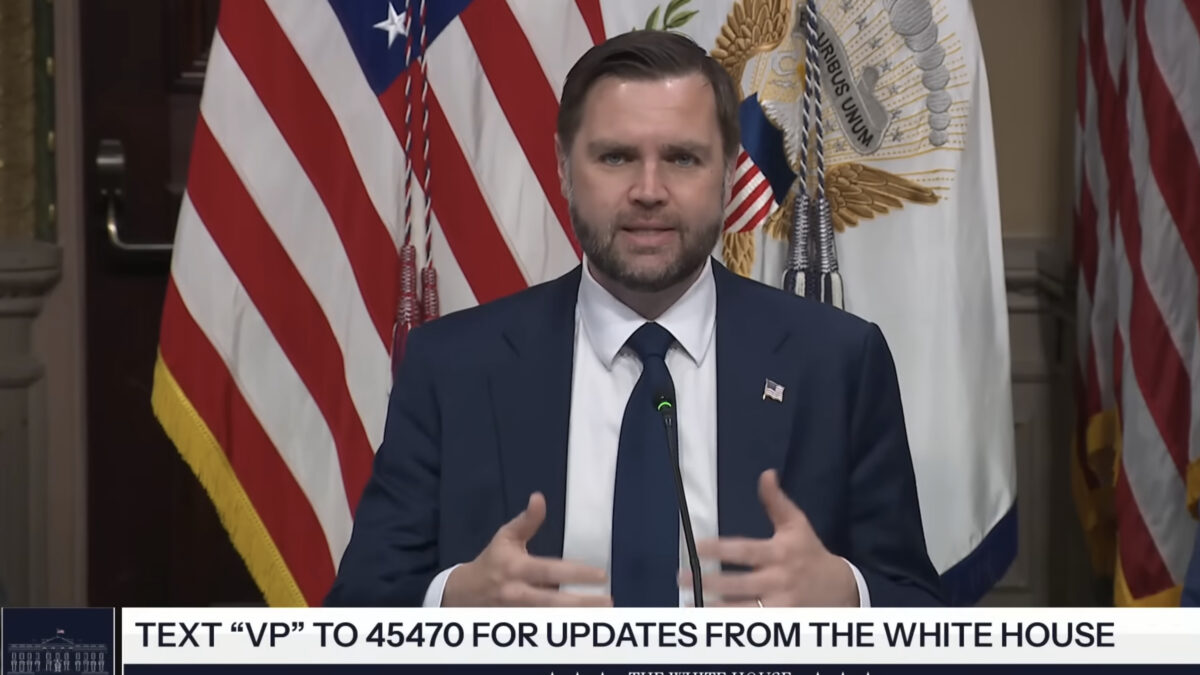

The federal subsidization of programs that integrate divisive and hateful ideologies into both the education of impressionable youth and technological development presents opportunities for the further erosion of national cohesion and of a globally competitive tech sector.

YR Media and Google did not respond to The Federalist’s requests for comment on the program by the time of publication.