Last week, sexually explicit AI-generated images of Taylor Swift were circulated on X. The posts garnered tens of millions of views, with commentators reveling in the demeaning spectacle. While the photos were eventually taken down, fake pornographic images of the singer also circulated on other websites such as Reddit, Instagram, and Facebook, as well as in darker corners of the internet.

Per The Daily Mail, the pop singer is furious and considering whether to sue the deepfake porn site — known as Celeb Jihad — which published the image and others like it.

A source close to Swift told The Daily Mail, “Whether or not legal action will be taken is being decided but there is one thing that is clear: these fake AI generated images are abusive, offensive, exploitative, and done without Taylor’s consent and/or knowledge.”

But Swift is hardly the only victim of AI-generated “deepfake” pornography, in which media is digitally altered to replace one person’s likeness with that of another. For years now, it has been weaponized against women and children.

As loyal Swift fans furiously rushed to report the images and accounts posting them, reports emerged of a 14-year-old London girl who took her own life after boys at her school created and circulated fake pornographic images of her and her classmates. Last year, boys at a New Jersey high school also created and shared AI-generated pornography of more than 30 of their female classmates. Last spring, a Twitch influencer was caught watching deepfake porn of his female colleagues. One of the victims told BuzzFeed News, “I saw myself in positions I would never agree to, doing things I would never want to do. And it was quite horrifying.”

Thanks to advances in artificial intelligence technology, all someone needs to generate a convincing deepfake video are several photos of their victim and access to Wi-Fi. While some men have been victims of deepfakes, deepfake pornography mainly targets women and young girls. A 2019 report by Deeptrace found that nonconsensual AI-produced pornography accounted for 96 percent of all deepfake content online and that this content solely targeted women. In a letter to the Department of Justice sent last year, Congressman Bob Good alerted the DOJ to reports of AI being used to “generate obscene, personalized images of minors under the age of 18.”

The Washington Post reported that 80 percent of respondents in a poll conducted on a dark-web forum said they had used or intended to use AI to create child sexual abuse materials.

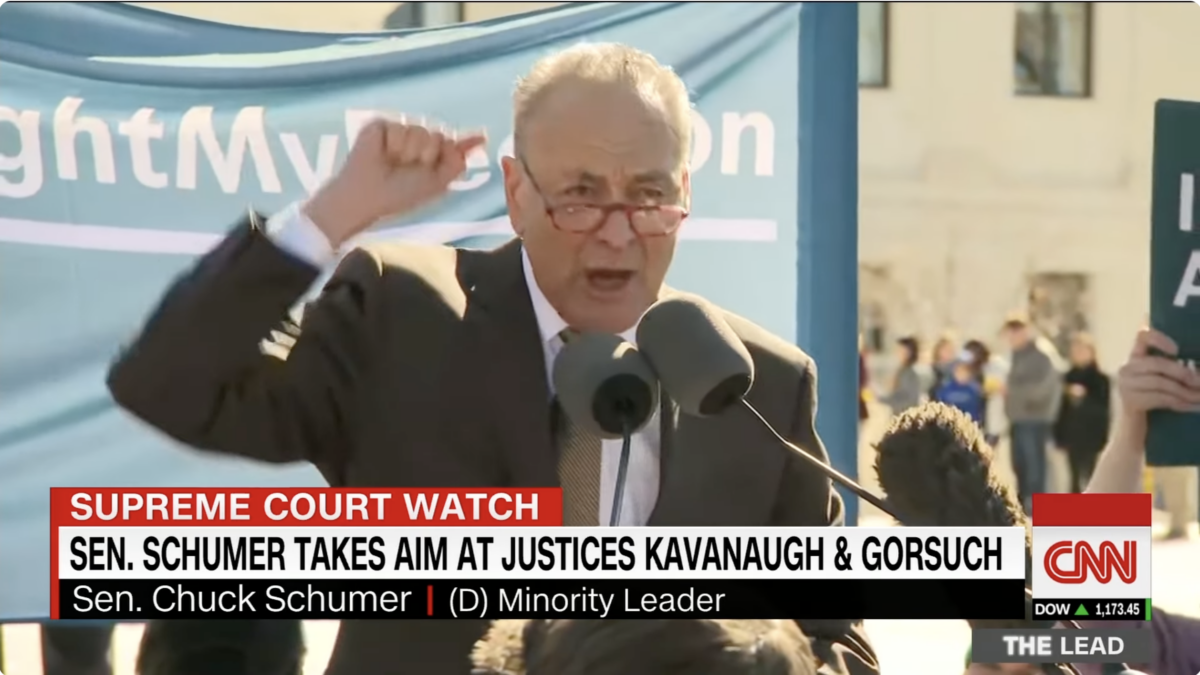

While nine states have laws against the creation or sharing of nonconsensual deepfake imagery, nothing exists at the federal level. In fact, there are no federal laws on the books regulating AI, despite a raft of legislation currently languishing in committee.

Earlier this month, Democratic Rep. Joe Morelle of New York introduced the Preventing Deepfakes of Intimate Images Act, a bill meant to stop the dissemination and proliferation of deepfake pornography. Perhaps the recent Taylor Swift controversy (much like Ticketmaster-gate of yesteryear) will generate enough political pressure to bring this bill to the House floor.

But some experts don’t think a deepfake prohibition will be enough.

“We need a change to Section 230,” said Jon Schweppe, policy director at American Principles Project. “Pornography as a whole should not have any sort of immunity from civil liability granted by the federal government. With reforming Section 230, you could add an amendment that’s specific to AI-generated content or deepfakes.”

Reforming Section 230 of the Communications Decency Act, which shields internet platforms from liability for what users post, has long been an aspiration for conservatives. Social media sites like Twitter and Facebook benefit from Section 230’s protections by not being liable every time a user publishes illegal content. But the porn industry enjoys the same protection, although much of the content uploaded to its sites is unlawful, such as child pornography, revenge porn, or other nonconsensual pornography.

“AI companies have no responsibility whatsoever because they can just say, well, the user of the AI created it,” Schweppe said. “You’re just going to have to impose mass liability on these companies to try to slow this stuff down because right now the AI companies are just fine. Like, it doesn’t bother them. It doesn’t affect them at all. And it’s almost impossible to track down the initial person who created and spread that clip for defamation.”

Another potential solution lies in age-verification laws, or similar laws that hold porn websites liable unless their users upload government-issued IDs proving they are 18 years of age or older. Eight states have already passed age-verification laws, with more to come in 2024, according to Schweppe. Instead of complying with the requirement, Pornhub has ceased operations in several of those states.

Rep. Greg Steube, a Florida Republican, has a similar idea for combating underage pornography that could also apply to deepfakes: remove Section 230 protections from porn sites that are unable to verify whether their content features minors. Sen. Mike Lee, R-Utah, has a parallel proposal, although it does not involve Section 230.

Schweppe says such a move could have a chilling effect on generative deepfake pornography targeting minors.

“It’s another route for telling sites you can’t have this content, and then hopefully, we’ll see less,” he said.

Washington has a tendency to ignore problems harming the little guy. But now that someone with power and influence has been affected, it’s increasingly likely politicians will get serious about combating such harmful technology.