A video leaks of a CEO of a Fortune 500 company. Right before a press conference, he’s seen chatting with reporters and making sexist comments about his coworker. The video goes viral, and shareholders demand that he step down immediately. The CEO vehemently denies ever saying anything derogatory, but the tape seems damning.

But something’s not right. His lips don’t match his words. None of the reporters react negatively to the comment. His face glitches occasionally.

A reporter at the conference releases his recordings from the event. Not only did the CEO say nothing nasty about his coworker, but he also said nothing about her at all. The audio doesn’t match, although it sounds like the CEO’s voice.

Several computer scientists study the video and determine that it’s been doctored. The video is a deep fake.

What’s a Deep Fake?

The situation above is hypothetical but could be commonplace in a short time. Think of deep fakes as Photoshop on steroids. Anyone with enough programming knowledge can seriously alter pictures, video, and audio to match the narrative he or she wants to create. The doctored video and audio look and sound real.

A recent paper titled “Deep Fakes: A Looming Challenge for Privacy, Democracy, and National Security” addresses deep fakes’ potential impact. The authors, Bobby Chesney and Danielle Citron, spoke at a Heritage Foundation event about the ramifications of this emerging technology. They recognized the difficulty in speaking in hypotheticals but wanted to avoid merely reacting to this new technology.

In their paper, they cite the University of Washington’s deep fake. The university demonstrated how seeing can stop believing by creating a video of former President Barack Obama saying things he never said. Because of the abundance of video and audio footage of him, researchers had ample resources for their next dupe.

This new technology is not only advancing but also becoming more readily available. Chris Bregler, an engineer for Google AI, noted at the event that a Reddit user has already posted code for deep fakes on the forum. Pairing deep fake tech with high-speed Internet will disseminate “fake news” like never before.

Scary Implications for Politics and Porn

The panelists mentioned several hypothetical situations where deep fakes could seriously harm American interests. The night before an election, opponents of a presidential candidate could release seemingly damning video of that candidate, swaying the vote their way. By the time the video is debunked and the truth gets out, the election is over, and people are still certain the candidate is guilty.

Additionally, the paper states that it’s not far-fetched to imagine countries like North Korea or terror groups like ISIS using deep fakes for propaganda. With some digital sleight of hand, video of American troops delivering aid to war-torn areas could become video of American troops harming civilians, creating further unrest.

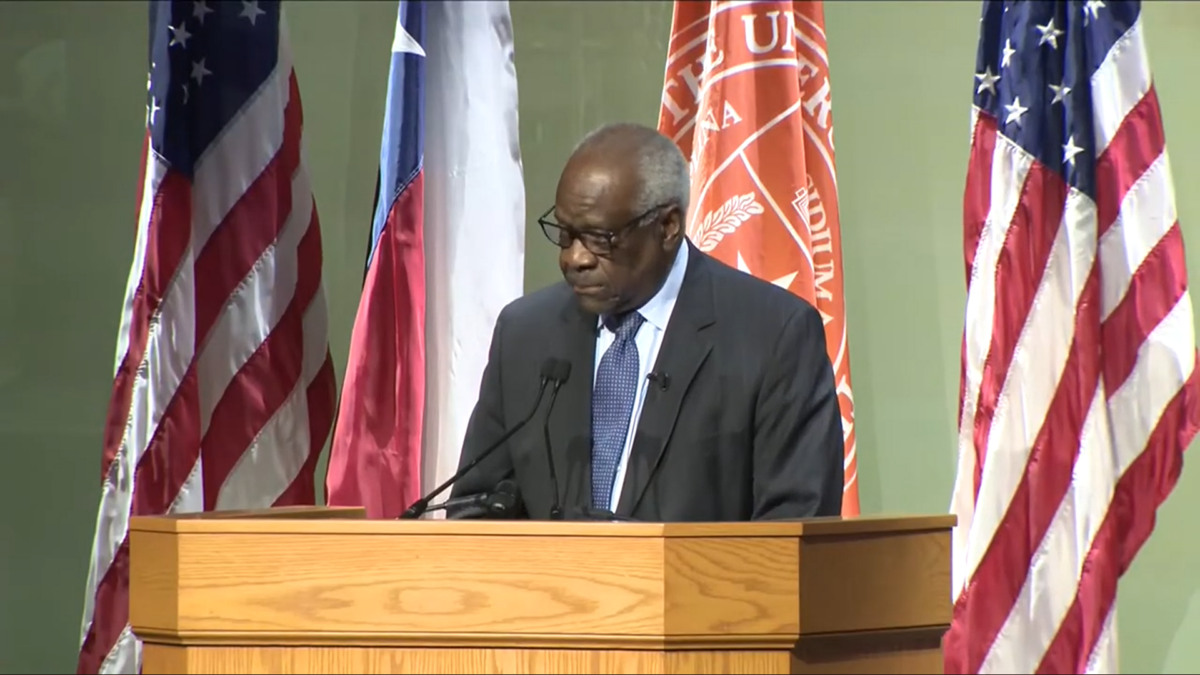

Sen. Marco Rubio spoke at the event and highlighted the instability that deep fakes could cause.

“In the old days, if you wanted to threaten the U.S., you would need ten aircraft carriers and long-range missiles… Now, you just need access to our Internet system,” said Rubio.

Rubio noted that a country like Russia, which attempted to influence the 2016 election and damage American interests, would love to wield this technology against the United States. Facebook ads and Twitterbots are nothing compared to faked video or audio of American leadership.

This technology has already hit closer to home. The concept of revenge porn — leaking nude pictures of ex-girlfriends without their consent — is already familiar. According to Citron, who has spent hours scanning Reddit for this abuse, jilted ex-boyfriends are excited about the opportunity deep fakes offer to humiliate past lovers.

By fusing pictures and video of their former partner onto that of an onscreen prostitute, these Reddit users can create convincing footage that appears to depict their ex-girlfriend in pornographic films. If that footage were the first search result to pop up after googling her name, it would not only wreak emotional havoc on the woman but also harm her professionally and socially.

How to Maintain Privacy and Truth

With the new reality of deep fakes approaching, some prominent CEOs and politicians might try to avoid the mess altogether by recording almost every second of their lives. That way, if video breaks, they can refute it outright. This strategy would have scary implications for privacy. Starting from the top, corporations will want to nip deep fakes in the bud.

Many people won’t lose any sleep if Bill Gates has to monitor more of his interactions to avoid controversy. However, these policies have a tendency to trickle down. Surveillance at the corporate level could become expected at a regional level. The panel spoke often of the “unraveling of privacy.” Once people come to demand such precautionary measures, abstaining from them becomes suspicious.

Why aren’t you recording yourself? What have you got to hide? Insurance companies have already adopted this tactic with devices that track your car’s speed and with health tracking initiatives. It’s normal for them to have that data.

Additionally, the threat of deep fakes could shield prominent people who actually do say awful things on camera. Chesney and Citron dub this the “liars’ dividend.” Again, a video leaks of a CEO making sexist remarks. He denies it. This is one of those deep fakes, he says, motioning scare quotes with his hands. Opponents within his company or lobbyists outside crafted the clip to make him look bad.

Who should people trust? What’s true when seeing might no longer be believing? Truth may decay as a result of deep fakes. As the oft-cited cliché goes, a lie can make it around the world before the truth gets its pants on. Even after retractions are printed and someone determines what’s real and what’s not, the story that leaked will be the one with staying power.

What Should We Do?

Deep fakes pose significant threats. But these threats shouldn’t have the final word on the future of deep fake technology.

The paper states outright that full bans won’t work. That’s because, as with Photoshop, deep fakes aren’t inherently bad. They have many positive uses in arenas such as education, art, and improving video quality. They aren’t solely used for undermining democracy and ruining the lives of ex-girlfriends. Additionally, banning them would infringe on a form of speech, an unconstitutional and dangerous act.

However, those real threats remain. Bregler was by no means a Pollyanna about the harm deep fakes could cause, but he was optimistic. Much like how society has adapted to Photoshop and the Internet in general, we’ll adjust to the reality of deep fakes, he said.

Current detection software is better at spotting deep fakes than deep fakes are at pretending to be real. Bregler also mentioned engineering colleagues whose proofs indicate detection software is always better than the deep fakes themselves. The technology behind the problem is the technology that solves the problem.

Overbearing government regulation, which may seem like the best option in the face of uncertainty like this, won’t effectively manage the problem but will instead stifle innovation and infringe on speech. Dealing in hypotheticals is tempting, especially in the face of uncertainty, but that uncertainty shouldn’t have the final say in policy.

We often forget that the Internet at its conception was a scary idea, with a lot of unknowns about how it would affect national security, business, and individuals. A laissez-faire stance toward it has not only helped foster its growth but has also left room for the invention of means to curb its potential wrongs. Deep fakes leave us with many unanswered questions. Yet we shouldn’t let the fear of what could be prevent the innovation ahead.

Update: On July 30, Reddit sent the following statement in response to this article: “As of February 7, 2018, Reddit made two updates to our site-wide policy regarding involuntary pornography and sexual or suggestive content involving minors. These policies were previously combined in a single rule; they will now be broken out into two distinct ones. Communities focused on this content and users who post such content will be banned from the site.”