The bubble hasn’t popped yet, but the writing is on the wall. America’s higher education system is bloated and ineffective and needs a dramatic overhaul. The good news is that it will get one. Unsustainable things eventually cease. The bad news is that Americans may have learned the wrong lessons about the four-year university degree. They are in danger of deciding that it’s simply a waste of money, when the reality is that a sensible approach to the extended liberal arts education requires more elitism than people today want to accept.

Americans are converging on two areas of consensus concerning higher education. First, they want it to be more affordable. In a recent Gallup survey, 74 percent of respondents said that the cost of higher education is beyond the reach of the average American; a 2011 Pew survey had similar findings. Second, they want college to be more reliable in preparing graduates for the workplace. In a slow economy youth unemployment will always be a problem, but this problem will only be worse if, as many contend, our education system is not targeted to the needs of employers.

The American love affair with college education has lasted a surprisingly long time, but like every forbidden passion, it must eventually end. Ballooning educational costs and a sagging job market make for an ugly combination, and nowadays everyone knows a few deeply indebted young people who are struggling to make their bank-breaking loan payments with a retail job or a part-time nanny position. Education is clearly crucial to an information economy, but we need to find cheaper and quicker ways to make people employable. Young adults should be establishing households and starting families, not working as the indentured servants of a bloated university system.

The obvious online solution

Fortunately, the solutions to these problems are as obvious as they are readily available. We live in an age of technology. Communicating information is easy. Of course, pedagogy is more than just a data transfer, but given a little time, today’s technological wizards should be able to develop effective ways of teaching useful subjects through affordable online programs. Young people can then credential themselves from home through targeted programs that will leave them with minimal debt. This idea has given rise to discussions of the “ten thousand dollar degree,” which has been promoted by education-interested figures like Bill Gates, and by politicians like Rick Perry.

If online education can deliver on these promises, it will break the bachelor’s degree’s hammerlock on the labor market, and the value of a four-year college education will plummet. At present, four-year universities are staying afloat because their degrees are still the admission ticket that enables graduates to be considered for competitive jobs. This works out well for employers so long as bright, ambitious young people continue going to college. Employers can trust undergraduate education to sort out the best prospective hires, and this gives them a cheap and legal way to narrow the field. But if intelligent young people start opting out of the system, employers will have to adapt, for fear of losing valuable talent to competitors. Universities will find themselves scrambling to justify their enormous consumption of resources. They may find that the public is unimpressed.

As online education begins its climb into the mainstream, expect the anecdotes to start percolating outwards. This young man took two years’ worth of online courses, and was earning six figures by the age of twenty-five. That young woman did an online pre-law program for six thousand dollars, and just made partner at a top firm. It won’t take people long to get the message: you don’t need college anymore to succeed. Young people will always value opportunity, but it’s safe to say that most will prefer the road to opportunity that doesn’t begin with a $100,000 tollbooth. After decades of broadcasting the message that college is for everyone, we may quite suddenly find ourselves wondering whether anyone should go to a traditional four-year college.

What will happen to the bricks-and-mortar university on that day? Will our proud campuses, with their towering scholastic libraries and ivy-covered arches, be auctioned for office space? Will college professors scramble to find jobs in the new economy, availing themselves of the same retraining methods that have rendered them obsolete? To anyone striding across a bustling university campus in the fall of 2013, these predictions may seem radical; college is so entrenched in American culture that it is hard to imagine it going into decline. But culture is a flexible thing, and the times are clearly a-changin’. To our grandchildren, college sweatshirts and decals may seem a quaint anachronism of a fondly remembered past, much like top hats or buggies.

The drawbacks to the university’s demise

Conservatives in general show little inclination to grieve over the impending collapse of higher education. This is understandable. The universities have been very successful at creating a liberal bias among America’s influential elite, and that fact has long darkened the horizons of conservatives’ political future.

Even so, there may be drawbacks to the university’s demise. Its legacy is an imperfect one to be sure, and its academic class has become far too accustomed to living off the fat of the land. But there are benefits to an established university system that a streamlined and employer-focused online education won’t easily be able to capture.

To put the point in a nutshell: does anyone benefit from a traditional four-year college education? If it went away, or came to be seen as a luxury tailored to a wealthy elite, who would be worse off?

When I raise this question with my own undergraduate students, they generally emphasize the value of college as a coming-of-age experience. This strikes me as a poor justification for the four-year university. Before the mid-century expansion of higher ed, people came of age without taking on $80,000 worth of debt in the process. If college is valued primarily as a social experience, we should close down most of the four-year colleges and open more youth social clubs. People could come of age for the cost of a gym membership, not the cost of a house.

Nevertheless, my students’ instincts are not completely off base. They are right to suggest that our educational expenditures can be justified, if at all, only through the complete impact that a four-year education can have on the student’s character. Higher education should be a formative experience, both intellectually and morally, and should leave students better equipped to tackle a whole range of possible challenges that the future might bring.

If such a transformative experience is possible, it might justify the elaborate set-up of the bricks-and-mortar university. The very experience of packing up, saying goodbye to old friends, and relocating to a new environment, prepares the student for a period of intellectual and moral growth. The university campus can (at least in principle) foster a kind of commitment and scholarly focus that would be difficult to achieve from a computer console in mom and dad’s basement. Early adulthood is a formative age, since it is the time when young adults come to appreciate their place in society and even in history. It is good for the movers and shakers of society to have spent those formative years broadening and disciplining their minds. We should want our leaders to have a general understanding of science, mathematics, economics and history, and of the political and philosophical principles that are foundational to our society. In short, we should want them to be liberally educated.

This is why we have universities. They have always been the nursery and playground of the elite few, ever since Plato’s Academy where (according to legend) only geometers were permitted to enter. At one time, it was understood that universities were tasked with forming cultured, humane and rational human beings, who could then serve society in a variety of ways as circumstance and inclination might dictate. University professors were simultaneously the guardians of our Western heritage, and the cultivators of tomorrow’s leaders and researchers and visionaries.

The language of that commitment to human excellence is still sprinkled through college promotional literature, and university presidents can produce it on demand. But the reality, today, is a bit more crass.

To a considerable degree, the university has become the victim of its own egalitarianism. Liberal progressivism has, to be sure, been poisoning the well for quite some time, and this alone would probably have prevented us from developing a fully satisfactory liberal arts education outside of a few select cultural enclaves. But the really insoluble problems date back to the mid-twentieth century, when the foundations of America’s great egalitarian experiment were laid.

Distorted incentive structures

The Greatest Generation was simultaneously pro-excellence and anti-elitism, and it was optimistic. It no longer seemed acceptable to leave university education as a privilege geared towards a tiny elite. Everyone should be able to enjoy the fruits of the liberal arts education. Thus, systematic efforts were begun to make college education available to everyone. Measured by its own standards, this project was wildly successful. By 2010, about 70 percent of high school graduates were going on to college.

Distorted incentive structures can partially explain why this experiment has failed so dramatically. So long as we are willing to funnel massive funding (both public and private) towards higher education, universities will always have an incentive to attract and retain students. They have no such incentive to ensure that students learn, and indeed, the existing incentive structure mostly militates against rigorous pedagogy. Student retention is a critical goal for almost every college or university; after all, a student who drops out will no longer be paying tuition. And it turns out that the best way to keep everyone in the pack is to hike at the pace of the slowest hiker. Student evaluations are an effective mechanism for ensuring that professors cooperate with this broader student-retention strategy. Anyone who doubts the seriousness of that institutional pressure should try telling an untenured professor that his evaluations from the previous semester included multiple complaints that the class made students “feel stupid.” Witness the hunted, desperate expression that passes over the instructor’s face.

Perhaps we should not be surprised that, as Richard Arum and Josipa Roksa reported in their book “Academically Adrift,” undergraduates today study only half as much as their parents did in the 1960s, and devote nearly four hours to socializing for every hour they spend with their books. No one in the present system has much motivation to push academic standards anywhere but down. Depressingly, Arum and Roksa also conclude that a significant percentage leave college having learned little or nothing. Here at last, we see the results of our grand educational experiment. Our grandparents wanted to ensure that everyone could have the benefit of a college education. We obligingly built a system so inclusive, you don’t actually need to go to college to capture the benefits.

Incentive structures can be improved, of course, and some efforts are already being made to hold universities more accountable for pedagogical effectiveness. How much, though, can we expect from students who were merely average in the first place? At some point, the Lake Wobegon effect will limit what the non-elite university can accomplish, and overly stringent efforts to pressure students may in the end be counterproductive.

The costs of “college is for everyone”

This point has been made quite effectively by Charles Murray in his recent article on the four-year bachelor’s degree. Murray wholeheartedly agrees that advanced study of the liberal arts can be enormously valuable for those who willingly apply themselves. A liberal arts education can instill mental discipline and moral growth. These benefits, however, cannot be imposed by force.

In general, Murray suggests, people will apply themselves with eagerness to material that challenges them without exceeding their intellectual ability. Learning is fun, but confusion is not. We should expect, therefore, to find a strong correlation between intelligence and the eagerness to tackle a truly ambitious liberal arts curriculum. For a minority of people, the unabridged liberal arts program might be a broadening and life-changing experience that uniquely enables them to realize their potential. Most people, however, will find that experience frustrating and demoralizing. Those people will do better if they spend their formative years developing other talents and attaining more targeted areas of expertise.

As Murray points out, the “college is for everyone” ideal carries a human cost.

Most young people do want a college degree, but for many, the quest will end badly. Murray reminds us that, despite our aggressive retention efforts, many of those who matriculate will end up leaving college with substantial debt and no degree. Scholastic failure is a bruising, demoralizing experience in a college-oriented society. People who might have spent their twenties as happy, productive citizens working respectable jobs and raising families instead find themselves indebted, unemployed and drifting.

We find ourselves, therefore, in a precarious situation. For decades we have been funneling young people through a carefully engineered system that rewards them for taking the scenic route to the workforce. So long as this path was affordable, and reliably directed travelers to a secure middle-class lifestyle, the inefficiencies of the arrangement could be overlooked. That day has passed. Higher ed is now placing an intolerable burden on young Americans, who will already have to contend with ballooning debt, a weak economy and wildly unsustainable entitlement commitments that their parents voted them before they were even born. Reforming higher education truly is the least that we can do to smooth that rocky road.

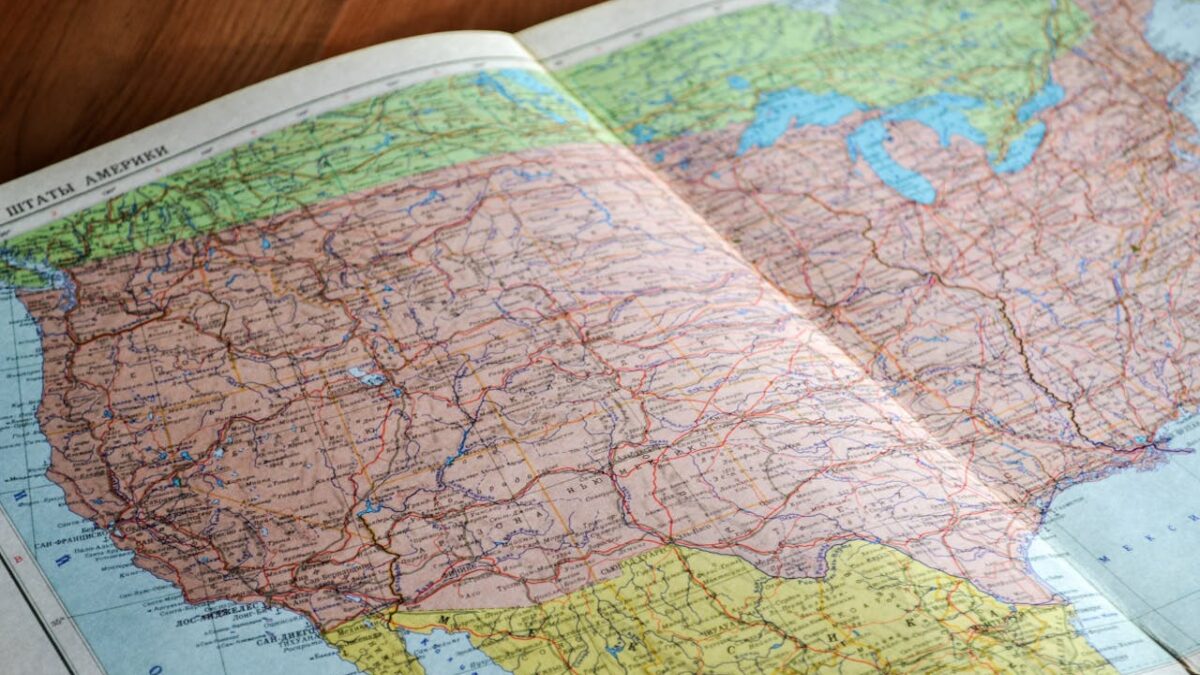

In our eagerness to lighten the load, however, we must be careful not to drop the map. If indeed our society is forging into unfamiliar terrain, we can ill afford to lose perspective on where we have been, and where we might be going. More than ever, we need our most influential citizens to be steeped in a strong understanding of how the world works, and in the philosophical and political principles that can protect us from the darker potentialities of human society. We need prudent, yet inspired individuals, who are capable of building a society that is not only prosperous, but also just, humane, and committed to human excellence. We need liberally educated leaders.

Elitism is distasteful to the citizens of democratic societies, but disguising it is a luxury we can no longer afford. We still need universities, but we need them to be, as in most periods of history, the domain of a relatively small group of people. Young people who are eager and intellectually capable should be encouraged to get a serious liberal arts education, and rewarded with opportunities that justify the investment. Those who are not should be directed to more targeted educational pathways that will enable them to find decent employment with a minimum of debt. Everyone should enjoy the fruits of a serious liberal arts education, but some may need to enjoy them less directly than others.

Rachel Lu teaches philosophy at the University of St. Thomas.