Judge Terry Doughty’s historic opinion in the censorship case Missouri v. Biden has been getting a lot of attention, especially after the Fifth Circuit Court of Appeals affirmed it. Rightly so. Now the U.S. Supreme Court says it will hear the case. This could be the most important Supreme Court term for free speech ever.

But there is another case that could have an even bigger effect on the freedom of American speech: Robert F. Kennedy Jr.’s case against Google, which has repeatedly censored Kennedy’s political speech on YouTube. With Kennedy launching an independent bid for the White House — and with Google doubling down on its censorship efforts — that lawsuit has taken on added importance.

After a hearing this week, Kennedy’s case could also soon be headed to the Supreme Court, which will have to grapple with the extraordinary efforts Big Tech has made to silence government critics like Kennedy, and with the danger created by the tech industry’s belief, shared by many government officials and legal scholars, that more censorship is needed. (I should note that I am one of the lawyers leading the Kennedy v. Google case.)

Before the 2016 election, Big Tech rarely censored anybody. They had tools that allowed users to block content they did not want to see. And they used algorithms to screen out obscene content. Otherwise, cyberspace — starting with the internet and then extending to the social media sites created for smartphones and tablets — was a public forum, the digital equivalent of a town square, a place where people could speak freely and debate the issues of the day, whether those were political, social, or cultural issues or anything else. For the most part, content moderation policies either didn’t exist or were designed to block violent conduct. They were not used to censor speech on political and social issues.

Indeed, during 2010 and 2011, social media websites played an important role in the uprisings dubbed the “Arab Spring.” YouTube even made an exception to its ban on violent content by allowing people to post violent videos from the Middle East uprisings if they were educational, documentary, or scientific (whatever that means). That policy lasted until 2014 when, in response to violent postings by ISIS (notably the beheading video of journalist James Foley), YouTube reversed course and banned content posted by terrorists and other “dangerous organizations.”

Still, before 2016, online content moderation policies focused on blocking violent and harassing content. Most Americans had no problem with that. Then two events rocked the political establishment.

‘Misinformation’ and Covid

First came the United Kingdom’s June 2016 vote to leave the European Union. Then came Donald Trump’s victory in the U.S. presidential election in November 2016. Both had been deemed improbable. Both appeared to show deep dissatisfaction with the political status quo. Thus, the political establishment developed an alternative explanation: that misinformation online deceived voters, tricking them into voting the “wrong” way (for Brexit and Trump, that is).

Then came the Covid-19 pandemic and a new era of online content moderation. For example, Facebook blocked posts that promoted protests of government “stay at home” orders, saying that “events that defy government’s guidance on social distancing aren’t allowed on Facebook.” As I wrote back in April 2020, those actions showed an extraordinary disregard for civil liberties. Equally appalling was the fact that supposed civil-rights advocates like the ACLU went along with them.

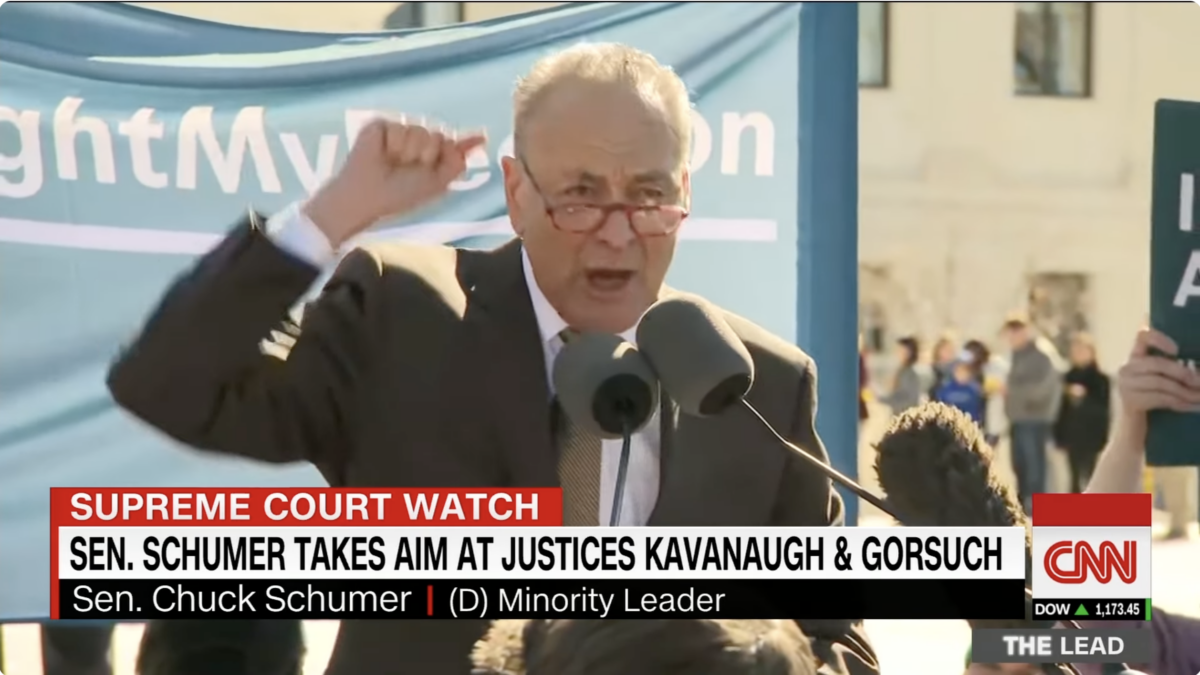

But that was just the start. By 2021, tech giants had removed Trump, the sitting U.S. president, from their platforms. The demands to censor speech online increased — especially after Joe Biden took over the White House.

Within days of Biden assuming office, White House officials were pressuring Big Tech to censor dissenting viewpoints, including those of Kennedy. Kennedy had become a prominent critic of government health officials, especially Anthony Fauci, the chief medical adviser to the president.

As Judge Doughty and the Fifth Circuit explained in Missouri v. Biden, the White House’s censorship campaign picked up speed during the first six months of the Biden administration. It focused on public health critics like Kennedy, who challenged the federal government’s efforts to boost Covid-19 vaccination rates, but expanded to censoring people who disagreed with public health officials about various topics.

For example, the current version of YouTube’s “medical misinformation” policy added a wide range of topics that are subject to removal from YouTube. The new policy spans multiple pages — and Google says it “isn’t a complete list.”

Why Should Government Determine ‘Truth’?

Like the first version, YouTube’s new misinformation policy looks entirely to government sources to determine what information gets removed from YouTube. It prohibits people from saying anything “that contradicts local health authorities’ (LHAs) or the World Health Organization’s (WHO) guidance about specific health conditions and substances.”

That is an important part of Kennedy’s lawsuit against Google. The people who support these content moderation policies often justify them by arguing that Big Tech companies are private entities that can do whatever they want. But what happens when the tech companies rely entirely on the government to decide what content gets removed? Why should the government decide what is true and what is “misinformation”? After all, the government cannot directly regulate false speech. The Supreme Court made that clear in New York Times Co. v. Sullivan. If the government can’t directly regulate this content, why should the Big Tech platforms be allowed to rely on the government to regulate it?

A Public Forum with Free Speech

Of course, the cyber-left would again mention that the tech platforms are privately owned. But, just a few years ago, in Packingham v. North Carolina, the Supreme Court called the internet the “modern public square.” Similarly, in 2019, the Supreme Court’s four liberal justices claimed that “[t]he right to convey expressive content using someone else’s physical infrastructure is not new.” And the tech giants certainly seem like the type of public forum (town squares and sidewalks, for example) that has always been open to free speech, regardless of who owns it. Indeed, the surgeon general calls them a digital environment.

The cyber-left would say that nobody has designated any tech platform as a public forum yet. Haven’t they, though? Wasn’t that the point of the Communications Decency Act of 1996? After all, that was the law that provided tech platforms with immunity for agreeing to host the speech of the broader public — a global community message board, if you will — instead of being treated as the publishers of speech. The modern internet would not exist without that law.

In any event, nobody ever declared that town squares and sidewalks would be the place for people to gather and speak about the issues of the day. They developed organically, in response to the populace. Hence the Supreme Court’s 1939 statement that “[w]herever the title of streets and parks may rest, they have immemorially been held in trust for the use of the public and, time out of mind, have been used for purposes of assembly, communicating thoughts between citizens, and discussing public questions.” The same is true of cyberspace, once dubbed the “Information Superhighway.” That evolution may have happened faster online than it did with town squares and sidewalks, but the speed does not make the tech platforms any less of a public forum.

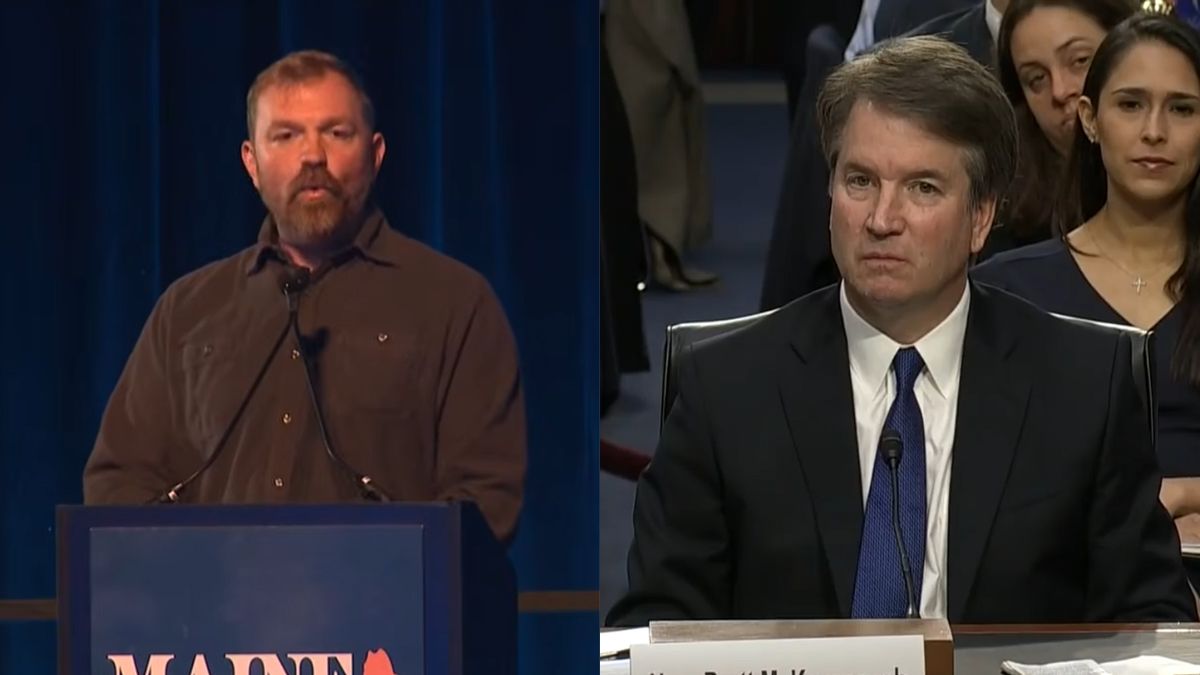

No doubt the Supreme Court will be wary of declaring tech platforms to be public forums. Doing so does not fit the judicial philosophy of conservative institutionalists like Chief Justice John Roberts. Justice Anthony Kennedy, Packingham’s author, would have liked the argument, but he retired and was replaced by a justice (Brett Kavanaugh) who has shown little interest in free-speech issues. In fact, it was Kavanaugh who wrote the anti-free-speech opinion in the 2019 case Manhattan Community Access Corporation v. Halleck.

But designating tech platforms as public forums would be smart. Missouri v. Biden involves the difficult distinction between government speech, which the law encourages, and government coercion, which the law prohibits. That is why the Supreme Court stayed the injunction the Fifth Circuit issued against government officials. Instead of focusing on the government speech versus government coercion distinction, the Supreme Court should recognize that tech platforms are public forums in which viewpoint discrimination is always prohibited but in which otherwise reasonable time, place, and manner restrictions are valid.

And that is why Kennedy v. Google matters. It goes beyond the government speech versus government coercion distinction that may haunt the free speech advocates in Missouri v. Biden. It focuses on what freedom of speech means in the American political process. It’s time for the Supreme Court to consider those issues, and to define what freedom of speech will mean for Americans in the digital age.