If the insurance estimates are to be believed, the economic fallout from the Great Texas Blackout will approach $20 billion. That total nears the economic carnage from Hurricane Katrina, the single most expensive disaster in modern times.

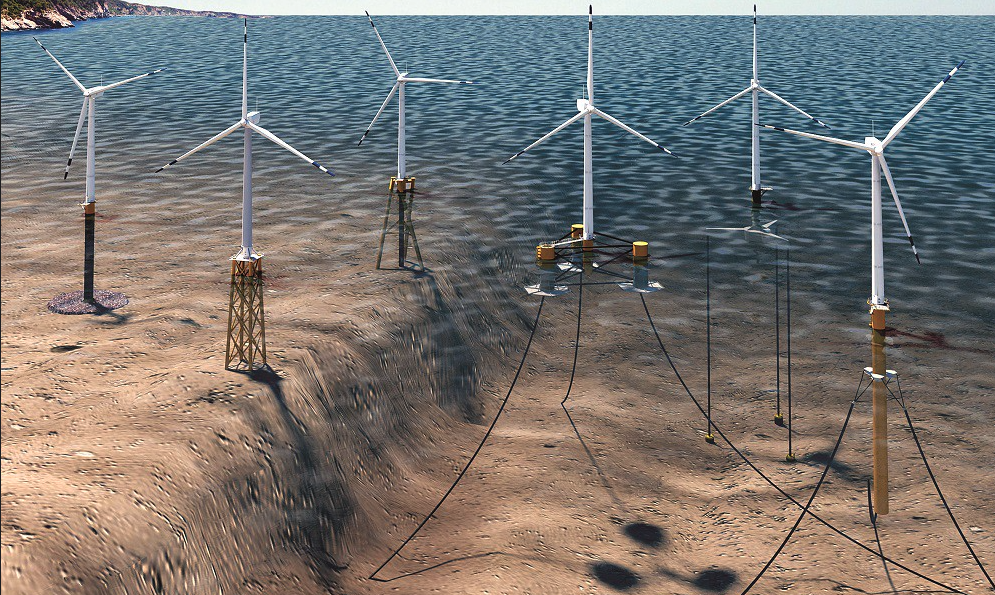

Eventually, forensics rather than finger-pointing will likely confirm what we know now: the Texas grid almost collapsed because of a domino-like chain of events. It began with a near-total loss of output from that state’s mighty wind farms. At the center of the debate about how to prevent a next time (and with natural disasters, there is always a next time), we find a simple truism: For critical infrastructures, the hallmark of reserve capabilities is “available when needed.”

It should be obvious: Few things are more necessary in modern life than a continuous supply of electricity. Never mind keeping lights on and charging Teslas; continuous kilowatt-hours are crucial for running gasoline pumps, home furnaces, and hospitals, refrigerating food and vaccines, and keeping the internet and wireless networks lit. However, for decades now, policy discussions and spending allocations for electric grids have been framed in terms of producing more green kilowatt-hours rather than more reliability and resiliency.

The electricity infrastructure is uniquely complex. Its challenges are centered on the velocity of invisible grid-scale energy movements. It’s the equivalent of safely controlling supertankers moving at the speed of Boeings. That’s why the National Academy of Engineering ranked electrification the No. 1 engineering achievement of the 20th century. Just two decades later, that’s become yesterday’s news.

What could be done to prevent another Great Texas Blackout is easily answered with a simple thought experiment. No outage would have occurred if, say, just a healthy fraction of the total Texas wind capacity had instead been a combination of properly winterized natural gas turbines and nuclear plants. The latter are far more difficult to build, but in the long run, far more consequential.

Indeed, if nuclear fission were just now discovered, it would be hailed as the magical solution for producing electricity using a trivial amount of land and material. One pound of nuclear fuel matches the energy in 60,000 pounds of oil, 100,000 pounds of coal, or 1 million pounds of Tesla batteries. Consequently, nuclear machines can run day and night with refueling needed once every couple of years.

That’s why, at the dawn of the atomic age, forecasters were giddy about prospects. Ford engineers circa 1957, amongst others, imagined cars that would go 10,000 miles without refueling, using a reactor they hoped someone might invent. The U.S. Air Force went one enthusiastic step further and spent a decade and $7 billion trying to build a nuclear-powered aircraft. It turns out there are daunting engineering challenges in democratizing the physics of nuclear fission.

So, here we are, with barely 10 percent of the world’s electricity derived from splitting atoms on this 65th anniversary of Calder Hall, the world’s first commercial nuclear plant, inaugurated in 1956 by Queen Elizabeth II. Instead of a massive push to find cheaper solutions for inherently reliable nuclear technology, we see a monomaniacal preoccupation with deploying inherently unreliable wind (and solar) technologies.

Yes, we know some Texas nuclear capacity was tripped offline during the Great Blackout. There was a failure to include cold-weather protection for “feedwater.” A lack of weatherizing is an avoidable glitch, one that’s far easier to fix than the vicissitudes of wind and sunlight.

To fix green unreliability, proponents are pushing grid-scale batteries. For perspective, however, consider what would be required for the Texas grid to handle predictable occurrences of several days without wind or sunlight. The quantity of batteries needed equals a decade’s worth of the entire world’s production, at a cost well north of $400 billion. That amount of money could build enough nuclear plants to power the entire Texas grid for the next century, not just a few days.
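For readers who want to see how an estimate of this kind is assembled, here is a minimal back-of-envelope sketch. Every input in it (the average grid load, the number of days covered, the installed cost per kilowatt-hour, and annual world battery output) is an illustrative assumption chosen for this example, not a figure taken from the article or from any cited source, and the function names are hypothetical.

```python
# Back-of-envelope sizing of multi-day battery backup for a large grid.
# All inputs below are illustrative assumptions, not the article's data.


def storage_needed_gwh(avg_load_gw: float, days: float) -> float:
    """Energy (GWh) needed to carry a constant average load for `days`."""
    return avg_load_gw * 24.0 * days


def storage_cost_billion_usd(energy_gwh: float, usd_per_kwh: float) -> float:
    """Installed cost, in billions of dollars, of `energy_gwh` of storage."""
    energy_kwh = energy_gwh * 1_000_000  # 1 GWh = 1,000,000 kWh
    return energy_kwh * usd_per_kwh / 1e9


def years_of_production(energy_gwh: float, world_gwh_per_year: float) -> float:
    """Years of global battery output needed to supply `energy_gwh`."""
    return energy_gwh / world_gwh_per_year


if __name__ == "__main__":
    avg_load_gw = 45.0          # assumed average grid load, GW
    days = 3.0                  # assumed length of the wind/sun lull
    usd_per_kwh = 150.0         # assumed installed battery cost, $/kWh
    world_gwh_per_year = 300.0  # assumed annual world battery output, GWh

    energy = storage_needed_gwh(avg_load_gw, days)
    cost = storage_cost_billion_usd(energy, usd_per_kwh)
    years = years_of_production(energy, world_gwh_per_year)

    print(f"Storage needed: {energy:,.0f} GWh")
    print(f"Installed cost: ${cost:,.0f} billion")
    print(f"World production: {years:.1f} years")
```

The result scales linearly with each input, so halving the assumed cost per kilowatt-hour halves the bill, while doubling the number of days covered doubles it. That sensitivity is worth keeping in mind when comparing competing estimates.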

This says nothing about the global emissions associated with fabricating the batteries. Batteries, and the key materials needed to make them, are produced mainly in Asia (primarily China) on coal-dominated grids. Don’t ignore, either, the gigatons of “energy minerals” that must be mined, along with those attendant environmental and geopolitical costs. The storage of electricity at grid scales is limited by physics barriers, not engineering challenges.

On the other hand, deploying nuclear energy at scale is an engineering challenge. It’s one with a relevant lesson from the history of aviation. Think of today’s nukes as the equivalent of a Boeing 747 or Airbus A380. The scale of such big machines limits widespread utility. Those jumbo jets never accounted for more than about 10 percent of the global aviation fleet. Both were retired from production (pre-pandemic). The smaller, cheaper, far more flexible designs of the Boeing 737 and Airbus A320 make up nearly two-thirds of global aircraft.

Numerous 737-class designs for nuclear reactors are already emerging. Getting those to market will be determined mainly by sensible regulations and political will. And if politicians are looking for inspirational next-generation energy moonshots, nuclear power may be the only technology where that over-used analogy is apt.

Scientists have known for a long time that nuclear energy is critical for any realistic vision of space travel or habitats, and Mars in particular (the Mars rover Perseverance and its predecessors are nuclear-fueled). As it happens, the technologies for a space reactor would also unlock next-generation earth-bound applications.

Some breakthroughs are needed. While there’s no “predictor function” — to use Bill Gates’ phrase — to know when those will happen, known physics dictates that any such developments are far more likely with nuclear energy than with magical batteries.

There is one unavoidable non-technical challenge: nuclear antipathy among environmental groups. But if they’re serious about an “energy transition,” there’s no possibility of that happening without atomic power.

Meanwhile, if the tech community wants to be truly visionary, rather than trading emissions credits for wind farms or tree planting, it should embrace research on next-generation micro-reactors. Such space-derived machines, one-hundredth the size of today’s behemoths, are exactly the scale that would be useful for directly supplying always-on power to a data center. The world has thousands of data centers, with thousands more yet to be built for the ever-expanding global Cloud.

Finally, for those who believe the world is in the throes of more frequent extreme weather events, grid resilience and reliability should be priority No. 1. While the “extreme weather” tropes are not supported by data (for an honest reality check, listen to a recent lecture by Steven Koonin, a physicist and former executive in the Obama Department of Energy), one doesn’t have to argue about global warming to know that weather will always create extreme challenges for grids.

If we continue to ignore the primacy of resilience, many more citizens will learn the hard lessons faced by Texans, and Californians, in recent months. It’s an avoidable disaster.